AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning


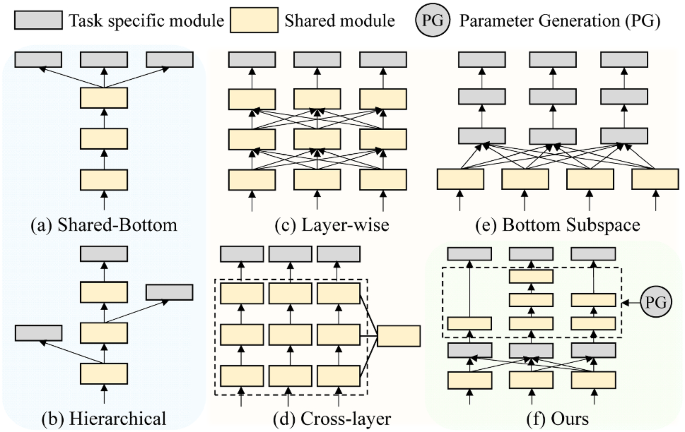

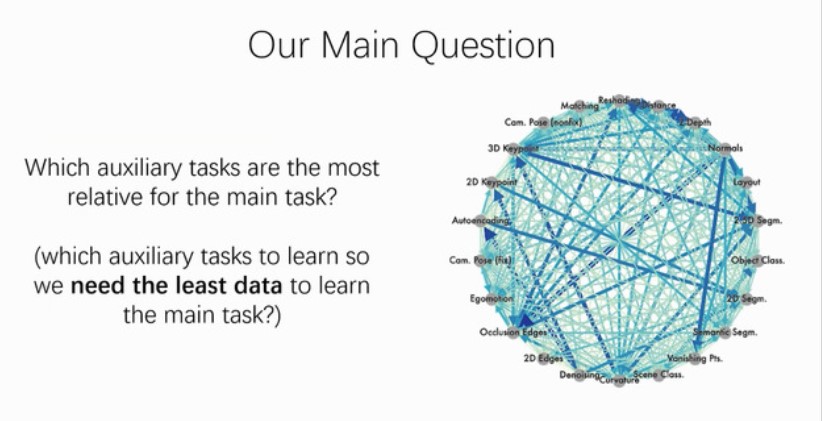

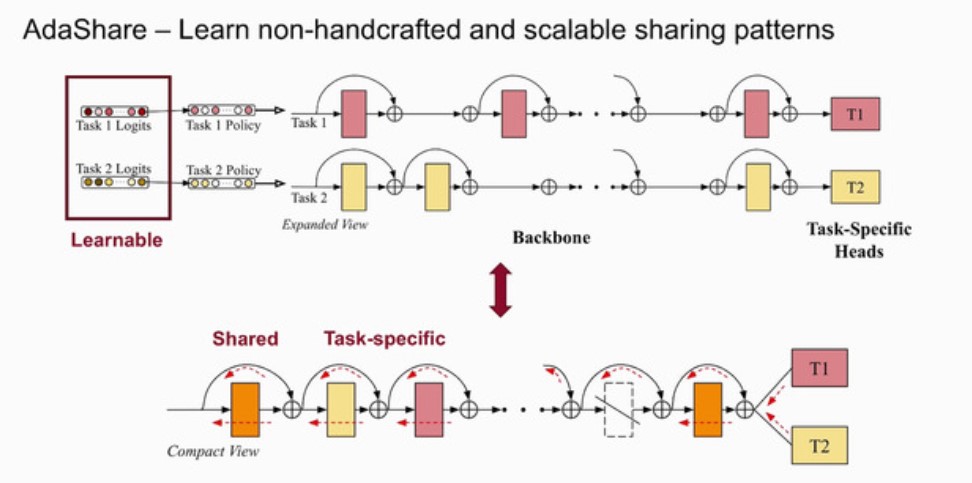

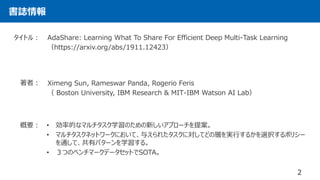

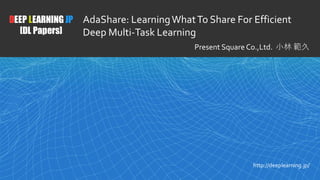

Multi-task learning is an open and challenging problem in computer vision. The typical way of conducting multi-task learning with deep neural networks is either through handcrafted schemes that share all initial layers and branch out at an ad hoc point, or through separate task-specific networks with an additional feature sharing/fusion mechanism. Unlike existing methods, this work proposes an adaptive sharing approach, called AdaShare, that decides what to share across which tasks to achieve the best recognition accuracy while taking resource efficiency into account.


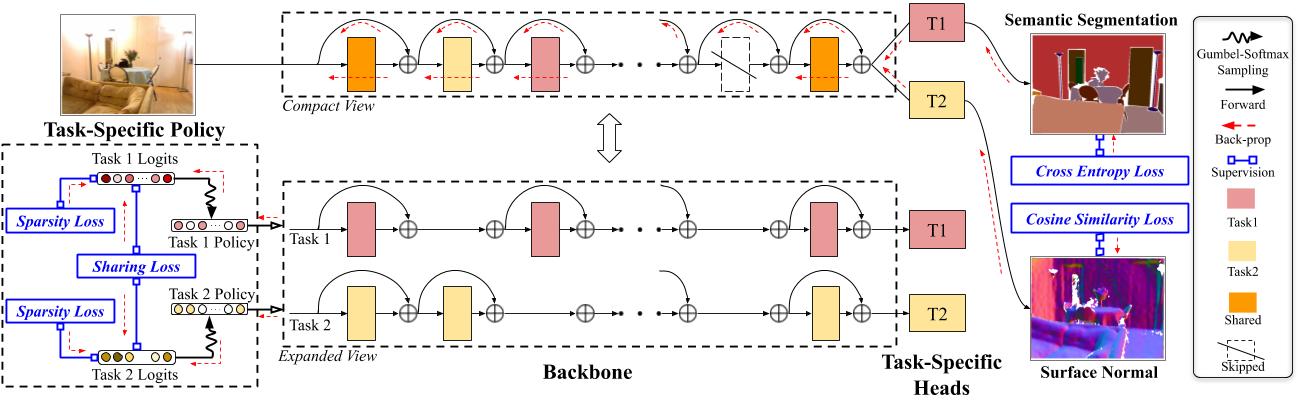

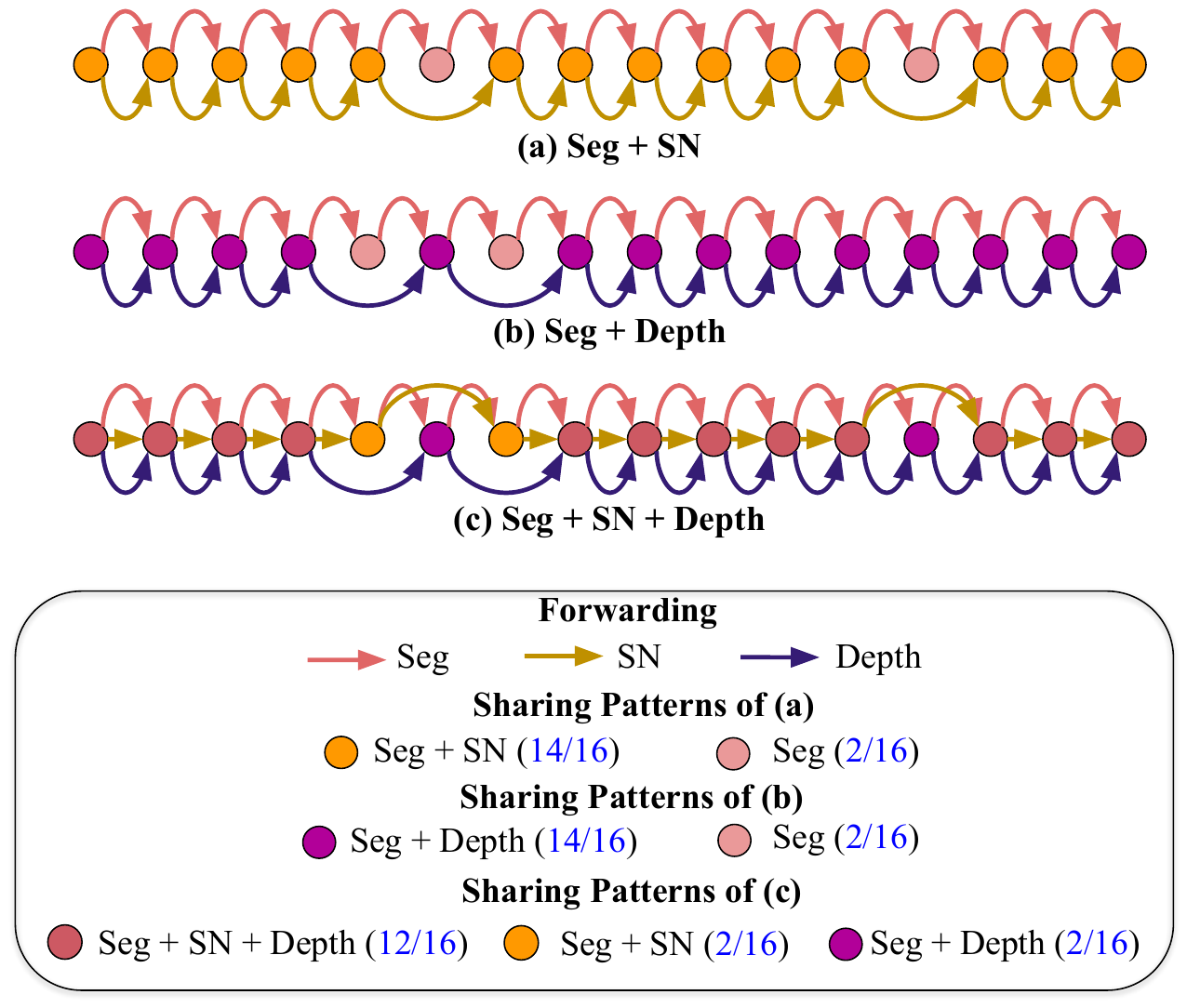

Keywords: task-specific, reinforcement learning, neural network, stochastic gradient descent, feature sharing

We present a novel and differentiable approach for adaptively determining the feature sharing strategy across multiple tasks in deep multi-task learning.

Table 1: Hyperparameters for NYU v2 2-task learning, CityScapes 2-task learning, NYU v2 3-task learning and Tiny-Taskonomy 5-task learning. We provide the learning rates (weight lr and policy lr), together with seg, sn, depth, kp and edge as the task weightings for Semantic Segmentation, Surface Normal Prediction, Depth Prediction, Keypoint Prediction and Edge Detection respectively.
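The task weightings named in the Table 1 caption are typically used to combine per-task losses into one training objective. The sketch below shows that combination as a plain weighted sum. The function name `weighted_multitask_loss` and all numeric values are hypothetical placeholders, not the values from Table 1, and the exact objective used by the authors may contain further terms.

```python
from typing import Dict


def weighted_multitask_loss(losses: Dict[str, float],
                            weights: Dict[str, float]) -> float:
    """Return sum over tasks of weight[task] * loss[task]."""
    missing = set(losses) - set(weights)
    if missing:
        raise KeyError(f"no weighting given for tasks: {sorted(missing)}")
    return sum(weights[task] * losses[task] for task in losses)


# Placeholder per-task losses and weightings (NOT the values from Table 1).
losses = {"seg": 2.0, "sn": 0.5, "depth": 1.0}
weights = {"seg": 1.0, "sn": 2.0, "depth": 3.0}

total = weighted_multitask_loss(losses, weights)
print(total)
```

Keeping the weightings in a dictionary keyed by the same short task names (seg, sn, depth, kp, edge) makes it easy to switch between the 2-task, 3-task and 5-task settings without changing the function.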


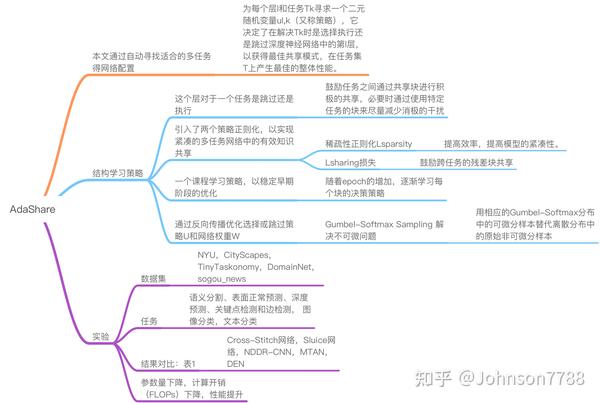

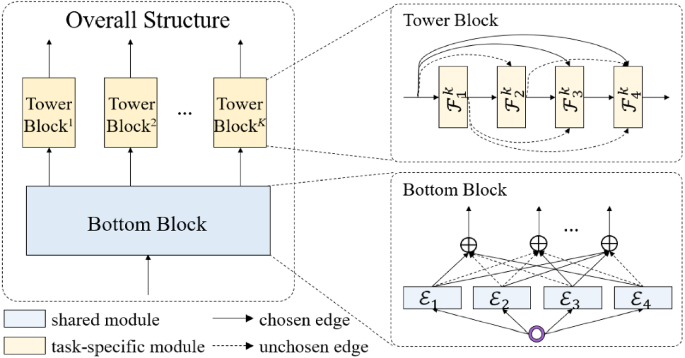

Abstract: Multi-task learning is a promising machine learning branch, which aims to improve the generalization of prediction models by sharing knowledge among tasks. Most of the existing multi-task learning methods rely on predefined task relationships and guide the learning process of models by ...

AdaShare is a novel and differentiable approach for efficient multi-task learning that learns the feature sharing pattern to achieve the best recognition accuracy, while restricting the memory footprint as much as possible. Our main idea is to learn the sharing pattern through a task-specific policy that selectively chooses which layers to execute for a given task in the multi-task network.

Related: Hongyan Tang, Junning Liu, Ming Zhao, and Xudong Gong. Progressive Layered Extraction (PLE): A Novel Multi-Task Learning (MTL) Model for Personalized Recommendations.
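The idea of a task-specific policy that chooses which layers to execute can be illustrated with a minimal sketch. It assumes a fixed binary policy per task and treats a skipped layer as the identity; in AdaShare the policy is learned jointly with the network weights, which this toy example does not attempt. The names `run_with_policy` and `shared_layers`, the scalar "layers", and the two example policies are all hypothetical.

```python
from typing import Callable, Dict, List

Layer = Callable[[float], float]


def run_with_policy(x: float, layers: List[Layer], policy: List[int]) -> float:
    """Execute only the layers whose policy entry is 1; skip the rest."""
    if len(layers) != len(policy):
        raise ValueError("policy must have one entry per layer")
    for layer, execute in zip(layers, policy):
        if execute:
            x = layer(x)  # layer selected for this task
        # otherwise the input passes through unchanged (assumed identity skip)
    return x


def shared_layers(policies: Dict[str, List[int]]) -> List[int]:
    """Indices of layers that every task executes, i.e. fully shared layers."""
    n_layers = len(next(iter(policies.values())))
    return [i for i in range(n_layers)
            if all(policy[i] for policy in policies.values())]


# Toy scalar "layers" standing in for network blocks.
layers: List[Layer] = [lambda x: x + 1.0, lambda x: x * 2.0, lambda x: x - 3.0]

# Hypothetical select-or-skip decisions for two tasks.
policies = {"seg": [1, 1, 0], "depth": [1, 0, 1]}

outputs = {task: run_with_policy(1.0, layers, p) for task, p in policies.items()}
print(outputs)                  # per-task outputs from the same layer stack
print(shared_layers(policies))  # layers used by both tasks
```

Because both tasks draw from one stack of layers, a layer selected by several tasks is shared and a layer selected by only one task is task-specific, so the sharing pattern falls out of the per-task policies rather than from a hand-chosen branching point.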

Original paper: AdaShare: Learning What To Share For Efficient Deep Multi-Task Learning
Authors: Ximeng Sun, Rameswar Panda
Publication date: November
Code: GitHub sunxm2357/AdaShare

Deep multi-task learning: Recently, deep learning has been integrated with multi-task learning to boost performance through its strong representation ability. However, learning multiple tasks simultaneously with a single neural network architecture poses new network design and optimization challenges.


Figure 6: Change in Pixel Accuracy for Semantic Segmentation classes of AdaShare over MTAN (blue bars). The classes are ordered by the number of pixel labels (the black line). Compared to MTAN, AdaShare improves the performance of most classes, including those with less labeled data.
